I was reading this study on the efficacy, safety, and lot-to-lot immunogenicity of BBV152 (aka Covaxin). Covaxin is an inactivated whole-virus vaccine, similar in approach to the inactivated polio vaccine. I am quite indifferent to this particular vaccine; frankly, beyond this study, I do not know anything about it. As can be expected, the study makes the vaccine look promising, but reading pharmaceutical studies at face value is not particularly useful these days.

For example, the study reports no serious safety concerns: the control group had more adverse events than the treatment group. That makes me wonder whether the groups were properly randomized (for example, the placebo group was nearly twice as likely to have severe obesity) and whether adverse events were properly recorded, but I do not want to speculate too much on the safety data. Indeed, despite the title of the paper, neither did the authors:

“The study was not powered to find safety differences and the sample size was based on the power to determine efficacy, no meaningful safety differences were observed between the groups.”

Let’s pretend for a moment that the authors were entirely forthcoming in their representation of the data.

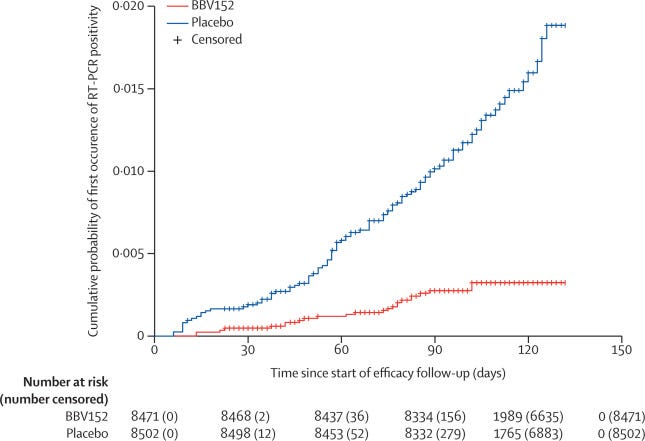

As such, they got a nice Pfizer, “nail in the coffin” graph:

That is relatively impressive and works out to 77.8% efficacy (about 10% less for symptomatic cases). Of course, as usual, the follow-up period started 14 days after the second dose (day 42 of the study). Before that point, the vaccine is less impressive: between the first and second doses, the vaccine efficacy was -73.3%. Ouch. To make up for that, one would have to be well past the second dose before reaching the break-even point.
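For readers unfamiliar with how a negative efficacy number arises, here is a minimal sketch of the standard calculation, VE = 1 − (attack rate in the vaccine arm ÷ attack rate in the placebo arm). It assumes a simple cumulative-incidence comparison; the paper's own estimates may use person-time or a statistical model, and the case counts below are hypothetical, chosen only to reproduce the two headline figures.

```python
def vaccine_efficacy(cases_vaccine, n_vaccine, cases_placebo, n_placebo):
    """Return VE = 1 - (vaccine-arm attack rate / placebo-arm attack rate).

    Simplified cumulative-incidence version; it ignores follow-up time,
    dropouts, and any covariate adjustment a real trial analysis would use.
    """
    attack_rate_vaccine = cases_vaccine / n_vaccine
    attack_rate_placebo = cases_placebo / n_placebo
    return 1.0 - attack_rate_vaccine / attack_rate_placebo


# Hypothetical arms of 1,000 people each (NOT the study's data).
after_dose_2 = vaccine_efficacy(10, 1000, 45, 1000)   # fewer cases in the vaccine arm
between_doses = vaccine_efficacy(26, 1000, 15, 1000)  # more cases in the vaccine arm
print(f"{after_dose_2:.1%}")
print(f"{between_doses:.1%}")
```

A VE of -73.3% simply means the vaccinated group was infected at about 1.73 times the rate of the placebo group over that window.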

Update: While I do not have the underlying data here, it appears that the break-even point did not occur until day 80 of the study (38 days after the second dose). The differences remained negligible until around day 92. I imagine that controlling for time could have made the break-even point a few days earlier. I have touched on break-even points in the past and will write a full article on them sometime in the future, so don’t worry if you don’t understand what I mean.
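As a rough illustration of what I mean by a break-even point, here is a minimal sketch under stated assumptions: we have daily new-case counts for each arm (the inputs below are hypothetical), and we look for the first day on which the vaccine arm's cumulative attack rate falls back to or below the placebo arm's after having exceeded it. The function name and this exact definition are my own; it ignores dropouts and does not do the time adjustment mentioned above.

```python
from itertools import accumulate


def break_even_day(daily_vaccine, daily_placebo, n_vaccine, n_placebo):
    """Return the first day (0-indexed) on which the vaccine arm's cumulative
    attack rate drops to or below the placebo arm's after having exceeded it.

    Returns None if the vaccine arm was never behind, or never caught up.
    Hypothetical helper: no censoring, dropout, or time adjustment.
    """
    cum_vaccine = accumulate(daily_vaccine)
    cum_placebo = accumulate(daily_placebo)
    was_behind = False
    for day, (cv, cp) in enumerate(zip(cum_vaccine, cum_placebo)):
        rate_vaccine = cv / n_vaccine
        rate_placebo = cp / n_placebo
        if rate_vaccine > rate_placebo:
            was_behind = True  # vaccine arm currently worse off
        elif was_behind:
            return day  # vaccine arm has caught up after an early deficit
    return None


# Hypothetical daily case counts for two arms of 100 people each.
vaccine_arm = [2, 2, 0, 0, 0, 0]
placebo_arm = [1, 1, 1, 1, 1, 0]
print(break_even_day(vaccine_arm, placebo_arm, 100, 100))
```

With these made-up numbers, the vaccine arm starts ahead on cases and the two cumulative curves meet on day 3; any case the vaccine prevents after that point is a net gain.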

Because the recruitment period fell between waves (recruitment occurred between November 16th and January 7th), while the latter part of the study (when the vaccine should be most effective) fell mid-wave, the overall VE ended up being 57.8% (not reported in the paper). Looking only at older participants (i.e., the people who most need protection from the virus), this number would almost certainly be even lower (VE from 14 days after dose 2 in those 60 and older was only 67.8%, with an extremely wide CI).

But one has to wonder: if vaccinations had begun during a wave, how would that have affected outcomes? This is pretty important, since policymakers in many countries, such as Canada, are prone to double down on vaccinations mid-wave. If the VE is negative until 14 days after the second dose, then idiotic policies like that would drive cases. This was seen in Alberta and Saskatchewan during the fall, where the mRNA vaccine campaigns had consistently negative vaccine effectiveness for a prolonged period among people taking the first dose, leading to an increase in overall cases that would likely not have existed without poor public health incentives.

In any case, the study is a good reminder that even in a tightly monitored population, the virus remains extremely rare. Out of 24,419 participants followed over a period of six months that coincided with India’s largest wave, there were only 684 suspected cases of the virus. Only 180 tested positive by PCR, 9 of which the authors concluded did not meet the case definition.
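To put those counts in proportion, here is a quick back-of-the-envelope calculation using only the figures quoted above. It assumes 24,419 is the combined size of both arms and makes no adjustment for follow-up time or for the fact that half the participants were vaccinated.

```python
participants = 24_419  # assumed: both arms combined
suspected = 684
pcr_positive = 180
excluded = 9  # PCR-positive, but judged not to meet the case definition

confirmed = pcr_positive - excluded

print(f"confirmed cases: {confirmed}")
print(f"suspected:    {suspected / participants:.2%} of participants")
print(f"PCR-positive: {pcr_positive / participants:.2%} of participants")
print(f"confirmed:    {confirmed / participants:.2%} of participants")
```

That works out to 171 confirmed cases, or roughly 0.7% of participants over six months.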

Thus, with infection this rare, a vaccine that either fails to provide long-term protection or shows serious safety signals is a failed vaccine. So far, every vaccine has failed the test. Will Covaxin be next?

Do we know yet whether the original Covaxin or the Oxford-AstraZeneca Covishield vaccine was more responsible for the development of the Delta variant in India, which then kindly shared its mutants with the world?